When the Referee Stops Blowing the Whistle

03 June, 2026 11:16AM by Greenbone AG

02 June, 2026 02:31PM by Steven Shiau

Some attacks steal data. Some attacks spy on users. And some attacks have only one goal: to make your service unavailable.

DDoS attacks fall into the last category, and they are more common and more accessible to attackers than many organizations realize. They don’t require exploiting a specific vulnerability or gaining access to internal systems. All it takes is generating enough traffic to cause your infrastructure to collapse under its own weight.

What makes a modern DDoS attack particularly dangerous is not only the volume it can reach (attacks exceeding 2 Tbps have been recorded), but also the variety of forms it can take. A volumetric attack that saturates bandwidth is relatively easy to identify. However, an application-layer attack that mimics legitimate traffic and silently exhausts your server’s resources is far more difficult to detect and stop.

This article explains how DDoS attacks work, the different forms they take, the damage they have caused in real-world incidents, and the mitigation strategies that actually work.

A DDoS (Distributed Denial of Service) attack involves flooding a server, network, or application with more traffic than it can process, making it inaccessible to legitimate users.

The key word is distributed. Unlike a traditional DoS attack, which originates from a single source and is relatively easy to block, a DDoS attack is launched simultaneously from thousands or even millions of compromised devices. That means there is no single IP address to filter. The attack comes from everywhere at once.

With that in mind, what exactly is the difference between DoS and DDoS?

In a DoS attack, the traffic originates from a single source. In a DDoS attack, however, malicious traffic comes from a distributed network of malware-infected devices that the attacker controls remotely. These networks are known as botnets. That distribution is what makes DDoS attacks an entirely different challenge.

As mentioned earlier, most DDoS attacks are carried out through botnets, which may consist of personal computers, servers, IP cameras, home routers, IoT devices running default credentials, and more. Any of these devices can become part of a botnet without its owner ever knowing. Attackers do not even need to build this infrastructure themselves.

Botnets can be rented on underground marketplaces at prices that have dropped significantly in recent years. This has effectively democratized the ability to launch large-scale attacks: any actor with sufficient motivation and a modest budget can orchestrate a DDoS attack capable of overwhelming well-provisioned infrastructure.

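Not all DDoS attacks operate at the same level. Depending on their objective, they target different layers: network bandwidth (volumetric attacks), protocol state on servers and intermediary devices (protocol attacks), or the application itself (application-layer attacks).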

This distinction is not merely academic. Each layer requires a different defense strategy, and misidentifying the type of attack you’re facing can lead to ineffective mitigation measures.

The objective is simple: consume all available bandwidth between the target system and the rest of the internet.

Massive traffic volumes are generated using techniques such as UDP floods, ICMP floods, and amplification attacks (DNS, NTP, Memcached), in which attackers exploit misconfigured servers that respond with packets far larger than the original requests.

A well-executed amplification attack can multiply the original traffic by factors of 10x, 50x, or even more. The attacker sends very little; the target receives an enormous amount.
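The arithmetic behind amplification can be sketched in a few lines. The request and response sizes below are hypothetical values chosen for illustration, not measurements of any real protocol, and the function names are invented for this example.

```python
def amplification_factor(request_bytes: int, response_bytes: int) -> float:
    """Ratio of the reflected response size to the original request size."""
    if request_bytes <= 0:
        raise ValueError("request_bytes must be positive")
    return response_bytes / request_bytes


def traffic_at_target_mbps(attacker_mbps: float, factor: float) -> float:
    """Traffic reaching the target if every request is reflected and amplified."""
    return attacker_mbps * factor


# Hypothetical sizes chosen only for illustration.
factor = amplification_factor(request_bytes=60, response_bytes=3000)
print(factor)                               # 50.0
print(traffic_at_target_mbps(100, factor))  # 5000.0
```

With these assumed numbers, 100 Mbps of attacker bandwidth becomes 5 Gbps at the target.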

These attacks do not aim to saturate bandwidth. Instead, they seek to exhaust the resources of servers or intermediary network devices, such as firewalls and load balancers, by exploiting the way communication protocols work.

The most well-known example is the SYN flood. Attackers initiate thousands of TCP connections by sending SYN packets but never complete the handshake. The server reserves resources for each pending connection, eventually filling its connection table until it can no longer accept new connections—including legitimate ones.
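The exhaustion mechanism can be illustrated with a toy model. This is a simplified sketch, not how any real TCP stack is implemented: the `ConnectionTable` class and its capacity are hypothetical, and real servers also expire half-open entries after a timeout, which is omitted here.

```python
class ConnectionTable:
    """Toy model of a server's table of half-open (SYN received) connections."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self.half_open = set()  # (source IP, source port) pairs awaiting the final ACK

    def on_syn(self, src_ip: str, src_port: int) -> bool:
        """Reserve a slot for a new handshake; return False if the table is full."""
        if len(self.half_open) >= self.capacity:
            return False
        self.half_open.add((src_ip, src_port))
        return True

    def on_ack(self, src_ip: str, src_port: int) -> None:
        """Handshake completed: release the half-open slot."""
        self.half_open.discard((src_ip, src_port))


table = ConnectionTable(capacity=4)

# The attacker sends SYNs and never follows up with the final ACK.
for port in range(4):
    table.on_syn("198.51.100.7", 40000 + port)

# A legitimate client now finds no free slot.
print(table.on_syn("203.0.113.20", 51000))  # False
```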

These are the most sophisticated and the most difficult to detect.

Rather than overwhelming the network, they generate HTTP or HTTPS requests that appear completely legitimate. The server processes them as though they came from real users until CPU, memory, or database resources are exhausted.

An HTTP flood can generate millions of GET or POST requests targeting a specific URL. Slowloris attacks open multiple connections and keep them active by intermittently sending partial headers, occupying connection slots without ever completing a request.

From the outside, the traffic may appear entirely normal. A traditional firewall cannot distinguish between a legitimate HTTP request and a malicious one. Mitigating these attacks requires deep inspection at the application layer.
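One way to recognize Slowloris-style connections is to look at how long a client takes to finish sending its headers. The sketch below assumes a hypothetical `PendingRequest` record and a 10-second deadline; both are illustrative choices, not values taken from any particular server or product.

```python
from dataclasses import dataclass


@dataclass
class PendingRequest:
    client: str
    opened_at: float          # seconds, when the connection was accepted
    headers_complete: bool    # True once the blank line ending the headers arrived


def connections_to_drop(pending, now, header_deadline=10.0):
    """Return clients whose headers are still incomplete after the deadline."""
    return [
        p.client
        for p in pending
        if not p.headers_complete and now - p.opened_at > header_deadline
    ]


pending = [
    PendingRequest("203.0.113.5", opened_at=0.0, headers_complete=True),
    PendingRequest("198.51.100.9", opened_at=0.0, headers_complete=False),
    PendingRequest("198.51.100.10", opened_at=25.0, headers_complete=False),
]
print(connections_to_drop(pending, now=30.0))  # ['198.51.100.9']
```

Only the connection that has held a slot for 30 seconds without completing its headers is flagged. The recently opened one is still within the deadline.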

The following DDoS attack examples show how denial-of-service campaigns have disrupted governments, stock exchanges, cloud providers, and some of the largest websites on the internet.

In March 2025, dozens of Spanish municipalities, provincial councils, and public-sector organizations saw their websites taken offline following a coordinated wave of denial-of-service attacks carried out by the group NoName057(16). Although no data was stolen, the impact was significant, leaving thousands of citizens without access to essential digital services.

In 2020, AWS reported in its threat intelligence report that it had mitigated the largest DDoS attack recorded at the time: a 2.3 Tbps attack sustained over three days. The target was an unidentified customer, and the attack relied on CLDAP reflection techniques.

The scale of the incident highlights the fact that cloud infrastructure providers are themselves targets, and that the capacity of even the largest providers is not unlimited.

For several consecutive days in August 2020, the New Zealand Stock Exchange experienced disruptions severe enough to force the suspension of trading operations.

The attack was not particularly sophisticated from a technical perspective, but it was persistent and targeted an organization with an extremely low tolerance for downtime.

The incident illustrates how the criticality of the affected service can greatly amplify the impact of an attack that, in a different context, might have been contained with far fewer consequences.

In February 2018, GitHub suffered the largest DDoS attack ever recorded at that point, peaking at 1.35 Tbps.

Attackers used Memcached amplification, exploiting internet-exposed caching servers to multiply traffic volumes on a massive scale.

GitHub was able to mitigate the attack within minutes by redirecting traffic to its scrubbing service. The incident delivered a clear lesson: even top-tier enterprise infrastructure can become a target, and response time is everything.

In October 2016, the Mirai botnet—made up primarily of security cameras and digital video recorders running default credentials—launched a massive attack against Dyn, one of the internet’s leading DNS providers.

The result was widespread outages affecting Twitter (now X), Netflix, Reddit, Spotify, and PayPal for several hours.

The attack revealed something many organizations had not fully considered: dependence on shared infrastructure as a risk vector. It did not matter how well each individual company protected its own systems. If the DNS provider resolving their domains went down, their services went down as well.

A DDoS attack is not just a technical problem for the IT team to solve. Its consequences affect the entire organization.

Downtime is the most immediate consequence. For e-commerce businesses, financial services, and SaaS platforms, every minute of downtime carries a direct and measurable cost.

Users who cannot access a service do not always understand the cause. The perception of unreliability can erode trust even after service has been fully restored.

Mitigating an ongoing attack requires human and technical resources that organizations are rarely staffed for under normal circumstances. In many cases, it means bringing in emergency services or purchasing additional capacity at short notice.

In sectors such as finance, healthcare, and critical infrastructure, a prolonged outage may result in SLA breaches or non-compliance with availability requirements, potentially leading to regulatory penalties.

This is perhaps the most concerning—and least discussed—scenario: in some advanced attacks, the DDoS campaign is not the primary objective but rather a smokescreen designed to distract the security team while an intrusion is carried out elsewhere in the infrastructure.

While the team is focused on traffic alerts, someone is coming through another door.

Early detection can make the difference between a contained incident and a prolonged outage. The most common indicators include:

None of these symptoms alone confirms an attack. However, the combination of several indicators—especially when they appear suddenly and simultaneously—should trigger your incident response procedures.

There is no single solution that can protect against every type of DDoS attack. Effective mitigation requires a layered approach, where each defense mechanism complements the others.

Rate limiting establishes a maximum number of requests or connections allowed per IP address within a defined period of time.

It provides a useful first line of defense against floods and brute-force attacks, but it has clear limitations when facing distributed attacks originating from thousands of different IP addresses.
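A minimal per-IP limiter might look like the sketch below. It uses a sliding window, which is one of several possible algorithms. The class name, limit, and window are assumptions for illustration, and timestamps are passed in explicitly to keep the example deterministic.

```python
from collections import defaultdict, deque


class SlidingWindowRateLimiter:
    """Allow at most `limit` requests per client IP within `window` seconds."""

    def __init__(self, limit: int, window: float) -> None:
        self.limit = limit
        self.window = window
        self.hits = defaultdict(deque)  # ip -> timestamps of accepted requests

    def allow(self, ip: str, now: float) -> bool:
        q = self.hits[ip]
        # Forget requests that have fallen out of the window.
        while q and now - q[0] >= self.window:
            q.popleft()
        if len(q) >= self.limit:
            return False
        q.append(now)
        return True


limiter = SlidingWindowRateLimiter(limit=3, window=1.0)
print([limiter.allow("192.0.2.1", t) for t in (0.0, 0.1, 0.2, 0.3)])
# [True, True, True, False]
print(limiter.allow("192.0.2.1", 1.05))  # True: the request at t=0.0 has expired
print(limiter.allow("192.0.2.2", 0.3))   # True: limits are tracked per IP
```

The last line also shows the limitation described above: a botnet in which every address stays under the per-IP limit passes this check untouched.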

Filtering based on IP reputation, geolocation, or behavioral patterns helps block known malicious traffic before it reaches the application. Its effectiveness depends on the quality of the threat intelligence feeding the system and the ability to update that intelligence in real time.
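As a rough sketch, reputation-based filtering reduces to checking each client address against a set of blocked networks. The blocklist below is hypothetical and uses documentation-only address ranges. In practice it would be refreshed from a threat-intelligence feed.

```python
import ipaddress

# Hypothetical blocklist; a real deployment would load this from a threat-intelligence feed.
BLOCKED_NETWORKS = [
    ipaddress.ip_network("198.51.100.0/24"),
    ipaddress.ip_network("203.0.113.64/26"),
]


def is_blocked(client_ip: str) -> bool:
    """Return True if the client address falls inside any blocked network."""
    addr = ipaddress.ip_address(client_ip)
    return any(addr in net for net in BLOCKED_NETWORKS)


print(is_blocked("198.51.100.23"))  # True
print(is_blocked("203.0.113.10"))   # False: outside the /26
print(is_blocked("192.0.2.1"))      # False
```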

Scrubbing services redirect traffic to dedicated filtering centers where malicious traffic is removed before reaching the target environment.

They are highly effective against large-scale volumetric attacks because they can absorb enormous traffic volumes through their global network capacity.

However, this model has limitations that organizations should understand:

For these organizations, an alternative approach is to deploy protection directly within their own infrastructure—at the network perimeter, in front of applications, with real-time inspection and response capabilities that do not depend on third-party intermediaries.

When defending against Layer 7 attacks, surface-level traffic inspection is not enough.

Organizations need visibility into request content, client behavior, and long-term access patterns.

A WAF (Web Application Firewall) performs deep inspection of HTTP/HTTPS requests and applies rules designed to identify anomalous behavior, including floods, aggressive scraping activity, and injection attempts disguised as DDoS traffic. Malicious requests are blocked before reaching the application.

An IPDS (Intrusion Prevention and Detection System) operates at the network level, identifying known attack signatures, traffic anomalies, and botnet-related behavior. It can automatically block or rate-limit suspicious traffic in real time.

In a modern Application Delivery Controller (ADC) architecture, DDoS mitigation typically occurs before traffic reaches backend servers.

When a DDoS pattern is detected, the platform can apply connection limits, reputation-based filtering, HTTP request inspection, and dedicated DoS protection rules to block malicious traffic in real time.

This architecture illustrates how these protection layers work together. In this example, the mitigation layer is implemented using SKUDONET.

When an IP address exceeds defined thresholds or exhibits behavior associated with botnets, requests are automatically blocked while legitimate traffic continues to reach backend servers.

An architecture with multiple entry points, distributed load balancing, and automatic failover capabilities reduces the impact of any attack.

If one node fails, traffic is redistributed. High availability is not just a performance enhancement—it is operational resilience.
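The redistribution step can be sketched as a round-robin selector that skips unhealthy nodes. `FailoverPool` and the node names are hypothetical. A real load balancer would drive `mark_down` and `mark_up` from health checks.

```python
from itertools import cycle


class FailoverPool:
    """Round-robin over backends, skipping any marked unhealthy."""

    def __init__(self, backends):
        self.backends = list(backends)
        self.healthy = set(self.backends)
        self._ring = cycle(self.backends)

    def mark_down(self, backend):
        self.healthy.discard(backend)

    def mark_up(self, backend):
        if backend in self.backends:
            self.healthy.add(backend)

    def pick(self):
        """Return the next healthy backend, or None if every node is down."""
        for _ in range(len(self.backends)):
            candidate = next(self._ring)
            if candidate in self.healthy:
                return candidate
        return None


pool = FailoverPool(["node-a", "node-b", "node-c"])
pool.mark_down("node-b")
print([pool.pick() for _ in range(4)])  # ['node-a', 'node-c', 'node-a', 'node-c']
```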

Unnecessarily exposed services, unjustified open ports, internet-accessible Memcached servers, or publicly reachable DNS services can all be leveraged by attackers to launch or amplify attacks.

If something should not be exposed, it should not be accessible.

Many amplification attacks exploit vulnerable or misconfigured services.

Maintaining up-to-date systems and regularly auditing the configuration of exposed services significantly reduces the attack surface.

Do not wait until systems begin to fail before detecting an attack.

Establish a baseline for normal traffic patterns and configure alerts that trigger when those patterns are exceeded, leaving enough time to respond before the impact becomes irreversible.
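A very simple baseline check is sketched below, assuming the alert threshold is the baseline mean plus three standard deviations. That rule and the sample values are illustrative assumptions. Production monitoring usually also accounts for daily and weekly seasonality.

```python
from statistics import mean, stdev


def alert_threshold(baseline_rps, sigmas=3.0):
    """Threshold = mean of the baseline plus `sigmas` standard deviations."""
    return mean(baseline_rps) + sigmas * stdev(baseline_rps)


def should_alert(current_rps, baseline_rps, sigmas=3.0):
    """True when the current request rate exceeds the baseline threshold."""
    return current_rps > alert_threshold(baseline_rps, sigmas)


# Hypothetical requests-per-second samples from a quiet period.
baseline = [100, 110, 90, 105, 95]
print(should_alert(108, baseline))  # False
print(should_alert(400, baseline))  # True
```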

When an attack begins, it is too late to decide what to do.

Teams should already know exactly which steps to follow: who to notify, which countermeasures to activate and in what order, how to communicate internally, and, when necessary, how to communicate with customers.

DDoS attacks have evolved from a form of digital protest into a systematic disruption tool used by actors driven by financial, competitive, or geopolitical motives.

Their techniques have evolved as well. Modern application-layer attacks are quieter, harder to distinguish from legitimate traffic, and often more effective than traditional volumetric floods while requiring far less traffic.

The response cannot be reactive.

An effective protection strategy combines architectural redundancy, early detection, multi-layer traffic filtering, and application-specific defenses. None of these measures is sufficient on its own, and relying exclusively on external cloud-based services introduces a single point of failure that recent incidents have shown to be very real.

Organizations responsible for critical infrastructure need to know exactly where their protection resides, who controls it, and what happens when that protection fails.

A DoS attack originates from a single source. A DDoS attack uses a distributed network of compromised devices (a botnet) to generate the volume of traffic required to overwhelm a target.

That distributed nature is what makes DDoS attacks far more difficult to stop using traditional defenses.

A firewall protects against DDoS attacks only partially. It can block known IP addresses and filter certain traffic patterns, but its capabilities are limited against large-scale volumetric attacks or Layer 7 attacks that mimic legitimate traffic.

Dedicated solutions are required, such as WAFs, IPDS platforms, scrubbing services, or a combination of all three.

A Layer 7 DDoS attack targets the application layer of the OSI model.

Rather than saturating bandwidth, it generates seemingly legitimate HTTP or HTTPS requests that consume application resources such as CPU, memory, and database connections.

These attacks are among the most difficult to detect because the traffic appears normal and requires deep application-layer inspection to be mitigated effectively.

Major cloud providers offer some level of built-in DDoS protection, but it is neither universal nor foolproof.

Sophisticated application-layer attacks can bypass these defenses. In addition, when the cloud provider itself becomes the target, the protection may become ineffective for all of its customers simultaneously.

A botnet is a network of compromised devices remotely controlled by an attacker.

In a DDoS attack, the botnet serves as the delivery mechanism: all devices send traffic to the target in a coordinated manner, generating the volume required to overwhelm it.

Today, IoT devices running default credentials remain one of the primary sources of botnet recruitment.

The answer depends on the duration of the attack, the industry, and the criticality of the affected services.

Beyond the direct cost of downtime, organizations must also consider remediation expenses, reputational damage, and—in regulated industries—potential penalties for failing to meet availability requirements.

Taken together, serious incidents often cost tens or hundreds of thousands of euros, and in some cases significantly more.

02 June, 2026 12:28PM by Isabel Perez

The 5th monthly Sparky project and donation report of 2026:
– Linux kernel updated up to 7.0.10, 6.18.33-LTS, 6.12.91-LTS
– Sparky 8.3 of the stable line released

Sparky of the rolling line will be released in June. Many thanks to all of you for supporting our open-source projects. Your donations help keep them and us alive. Don’t forget to send a small tip in June too, please.

01 June, 2026 10:52AM by pavroo

Welcome to the latest Armbian Newsletter: your source for the latest developments, community highlights, and behind-the-scenes updates from the world of open-source ARM and RISC-V computing.

Armbian v26.5.1 delivers another strong round of improvements across the project, focusing on expanded hardware support, desktop and userland refinements, build framework modernization, and infrastructure enhancements. This release introduces new board images and platform updates, improves Ubuntu 26.04 "Resolute" integration, refines Bianbu desktop support, adds firmware and driver updates including AX210 wireless support, and continues ongoing work to strengthen the build system, CI pipelines, and developer tooling. Numerous kernel, bootloader, and device tree updates further improve stability, compatibility, and performance across a wide range of ARM and x86 platforms, reinforcing Armbian’s commitment to providing a reliable and flexible Linux distribution for single-board computers, embedded devices, and edge computing deployments.

Join us in making open source better! Every donation helps Armbian improve security, performance, and reliability — so everyone can enjoy a solid foundation for their devices.

30 May, 2026 04:09PM by Michael Robinson

Hello, Community!

The May development update is here. Despite the fact that we had to deal with a downpour of vulnerabilities such as Copy Fail, Dirty Frag, and others (they are all fixed in rolling and in emergency LTS release updates available to subscription holders now!), the VyOS team and community members still added quite a lot of new features and bug fixes this month.

They include a fix for the long-standing, very annoying bug that led to needless OpenVPN server restarts on config changes that only affected user settings that go to the client config dir, multiple new options for DHCPv4 and DHCPv6 servers, initial support for traffic engineering in segment routing, and more.

29 May, 2026 10:45AM by Daniil Baturin (daniil@sentrium.io)

28 May, 2026 11:48AM by t.lamprecht (invalid@example.com)

The debate around Digital Sovereignty is often framed as a contest between the United States and Europe, yet the underlying issue resonates far beyond these regions. Around the world, countries seek measurable control over government IT systems and data infrastructures while safeguarding citizens’ privacy and civil society. Their shared goal is to reclaim autonomy in a world where the “rules-based international order,” which once guaranteed security, reliability, and access, has eroded.

During my work with clients in Canada, I’ve seen how sovereignty serves as both a technological and diplomatic solution. By ensuring that the infrastructure managing data, identity, and public services remains under national control, so-called “middle powers,” like Canada, can shape their own fate. At this intersection, a geopolitical challenge becomes a technical one: when code is open, inspectable, and locally governed, nations can transition from mere consumers of technology to co‑creators of it.

On the surface, there seems to be little chance for controversy when it comes to satellite systems. Yet when companies like GHGSat monitor greenhouse gases and thus climate change, there is a real risk of running afoul of governments that want to hide pollution or simply don’t believe in climate change. Digital dependencies can quickly threaten a business. After all, there is no quicker way to force an organization to its knees than to cut it off from its email or logins.

Yet, it is not just the private sector that fears this overreach. Sovereign-oriented IT might look like a ministry of education operating thousands of schools through a federated, self-hosted identity system. Or a regional government integrating healthcare, transport, and licensing platforms under an Open Source identity framework that ensures privacy and legal compliance. Such implementations bring the principle of sovereignty to everyday life, making the abstract tangible through operational systems.

There is no project, big or small, that cannot gain some additional control. Even venerable institutions like the International Criminal Court must fear technological influence. The recent US sanctions against the ICC, as well as China’s push to control technology through foreign investment, underscore the need to place technological independence at the forefront of every business and organization.

Identity management sits at the heart of digital sovereignty. Whoever controls identity controls access, policy enforcement, and data visibility. Univention’s self‑hostable platform allows public and private entities to retain this control locally or within accountable institutions, rather than in opaque cloud infrastructure abroad.

Added transparency and accountability are especially important as geopolitical uncertainty deepens. In Canada, for instance, dependency on large U.S. cloud providers is declining in favor of open, sovereign-ready alternatives. Univention’s infrastructure enables governments, schools, and enterprises to unify digital identities across diverse applications while maintaining freedom of choice. Its customizable login portal and user interface not only streamline the IT landscape but symbolize practical sovereignty where compliance, flexibility, and consistency coexist.

Catalyzed by these Open Source solutions, many Canadian institutions are redesigning how they manage IT. Digital sovereignty, they’ve realized, isn’t a single product. It is an ecosystem. Identity management, as the key to access, data privacy, and integration, forms one of its essential pillars. The same realization now guides public sector digitization across the globe: nations modernizing education, healthcare, or citizen services must choose between proprietary, extra-territorial systems and open, sovereign architectures. The latter option grants local control and space for domestic innovation.

For middle powers such as Canada, Germany, and South Korea, this local control and technological independence are especially important. Globally, these countries are neither dominant superpowers nor passive players, but influential states that depend heavily on global stability and trade. Historically, their geopolitical toolkit centered on diplomacy, development aid, and carefully measured defense partnerships. Yet in today’s fractured digital landscape, where data localization laws and cloud contracts touch the core of sovereignty, foreign policy increasingly overlaps with IT strategy.

The European Union exemplifies this evolution, defining digital sovereignty as the ability to control and make decisions about digital infrastructure without dependency on outside providers. The same logic drives every state seeking freedom of choice amid the U.S. and Chinese technological spheres. Open Source doesn’t erase these tensions, but it transforms their geometry. Governments and businesses can share resources on open foundations, avoid vendor lock-in, and retain the ability to adapt independently. In a world where agility matters more than scale, that flexibility is a crucial force multiplier.

No single country or organization will by default define the global digital future. Yet each organization faces a choice between outsourcing its critical systems and investing in the capacity to co‑architect shared infrastructure. Open Source tilts this equation toward cooperation and agency. It allows coalitions of users, whether nations, industries, or institutions, to co‑develop platforms aligned with their specific legal frameworks, cultures, and values.

As Canadian Prime Minister Mark Carney recently warned, the comfortable predictability of the old, rules‑based order has vanished. The replacement will be a network of shifting alliances, standards, and digital dependencies that evolve continuously. In this complex environment, technology decisions are inherently political. Every cloud migration, procurement contract, or identity system quietly reinforces one configuration of power over another.

In the technology sector, Open Source offers an antidote. It gives us transparency, flexibility, and collective stewardship. It lets nations, organizations, and individuals keep essential parts of that power within their regulatory reach and aligned with their values. By transforming conference slogans into working code maintained by accountable local teams, Open Source operationalizes digital sovereignty rather than leaving it rhetorical. For middle powers and others navigating between global giants, open architectures and sovereign-ready identity platforms remain among the last reliable levers to ensure autonomy in a world of contested connectivity.

The post Digital Sovereignty and the Role of Open Source in a Fragmented World first appeared on Univention.

28 May, 2026 09:24AM by Kevin Dominik Korte

The first release candidate (RC) for Qubes OS 4.3.1 is now available for testing. This patch release aims to consolidate all the security patches, bug fixes, and other updates that have occurred since the release of Qubes 4.3.0.

When the stable release will arrive depends on the number of bugs discovered in this RC and their severity. As explained in our release schedule documentation, our usual process after issuing a new RC is to collect bug reports, triage the bugs, and fix them. If warranted, we then issue a new RC that includes the fixes and repeat the process. We continue this iterative procedure until we’re left with an RC that’s good enough to be declared the stable release. No one can predict with certainty, at the outset, how many iterations will be required (and hence how many RCs will be needed before a stable release), but we tend to get a clearer picture of this as testing progresses.

Since the changes between 4.3.0 and 4.3.1 are relatively minor, we currently don’t anticipate any major problems requiring a second RC. We currently expect to be able to publish the stable 4.3.1 release in one to two weeks.

If you’d like to help us test this RC, the best way to do so is by performing a clean installation with the new ISO. As always, we strongly recommend making a full backup beforehand and updating Qubes OS immediately afterward in order to apply all available bug fixes.

As an alternative to a clean installation, there’s also the option of performing an in-place upgrade without reinstalling. However, since Qubes 4.3.1 is a patch release, it’s essentially Qubes 4.3.0 inclusive of all updates to date, which largely amounts to just using a fully-updated 4.3.0 installation. By contrast, a clean installation covers other areas that could also benefit from testing, such as the installation procedure, which is why it’s the recommended testing method.

Regardless of your testing method, please help us improve the eventual stable release by reporting any bugs you encounter. If you’re an experienced user, we encourage you to join the testing team.

It is possible that templates restored in 4.3.1 from a pre-4.3 backup may continue to target their original Qubes OS release repos. This does not affect fresh templates on a clean 4.3.1 installation. For more information, see issue #8701.

View the full list of known bugs affecting Qubes 4.3 in our issue tracker.

A release candidate (RC) is a software build that has the potential to become a stable release, unless significant bugs are discovered in testing. RCs are intended for more advanced (or adventurous!) users who are comfortable testing early versions of software that are potentially buggier than stable releases. You can read more about Qubes OS supported releases and the version scheme in our documentation.

The Qubes OS Project uses the semantic versioning standard. Version numbers are written as [major].[minor].[patch]. Hence, we refer to releases that increment the third number as “patch releases.” A patch release does not designate a separate, new major or minor release of Qubes OS. Rather, it designates its respective major or minor release (in this case, 4.3) inclusive of all updates up to a certain point. See our supported releases for a comprehensive list of major and minor releases and our version scheme documentation for more information about how Qubes OS releases are versioned.
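The [major].[minor].[patch] scheme is easy to check mechanically. The sketch below is an illustration rather than Qubes OS tooling: the `Version` type, the helper names, and the `-rcN` suffix format are assumptions made for this example.

```python
import re
from typing import NamedTuple, Optional


class Version(NamedTuple):
    major: int
    minor: int
    patch: int
    rc: Optional[int]  # None for a stable release


# Accepts "4.3.1" or "4.3.1-rc1"; the "-rcN" suffix format is an assumption.
_PATTERN = re.compile(r"^(\d+)\.(\d+)\.(\d+)(?:-rc(\d+))?$")


def parse_version(text: str) -> Version:
    m = _PATTERN.match(text)
    if m is None:
        raise ValueError(f"not a [major].[minor].[patch] version: {text!r}")
    major, minor, patch, rc = m.groups()
    return Version(int(major), int(minor), int(patch), int(rc) if rc else None)


def is_patch_release_of(new: Version, old: Version) -> bool:
    """True when major and minor match and only the third number increased."""
    return (new.major, new.minor) == (old.major, old.minor) and new.patch > old.patch


old = parse_version("4.3.0")
new = parse_version("4.3.1-rc1")
print(new)                            # Version(major=4, minor=3, patch=1, rc=1)
print(is_patch_release_of(new, old))  # True
```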

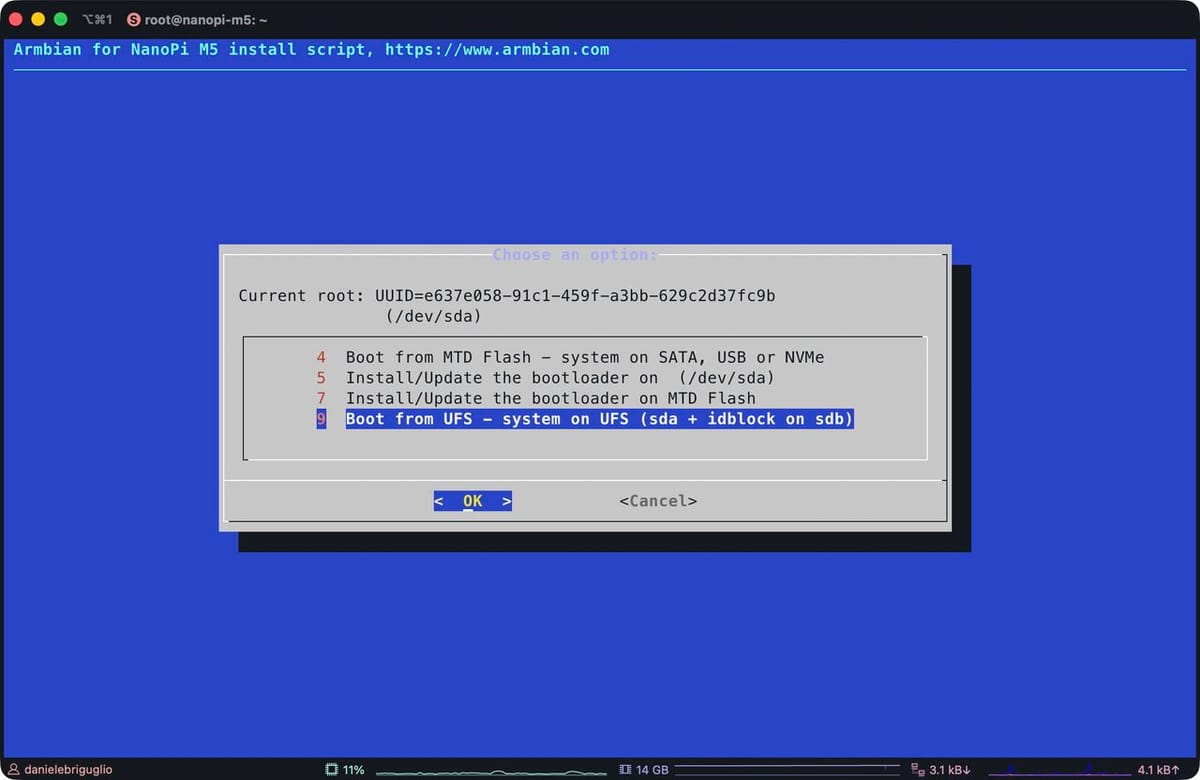

Armbian’s next release boots the FriendlyElec NanoPi M5 end-to-end from UFS on a mainline U-Boot, with no proprietary recovery image in the loop. It is the first RK3576 board in the catalogue to reach this state, and the integration pattern paves the way for the others.

UFS, the storage class that replaced eMMC in phones, is packet-based and full-duplex with command queuing. The practical gain over an SD card shows up in random I/O, in latency under concurrent load, and in write endurance that holds up over years of deployment. FriendlyElec ships UFS on the M5 because the RK3576 has a native UFS controller, a sensible choice for any board destined for kiosks, robots, or industrial gateways.

The UFS controller IP itself had a partial driver in mainline U-Boot. Everything around it was not wired: PHY init sequencing, the regulator rails the device needs before it responds, the device-tree glue that tells U-Boot "yes, this board has UFS." For the M5, none of it existed. There is also a cosmetic detail that catches every newcomer, namely that Rockchip’s loader tooling labels UFS as SATA in the RKDevTool storage dropdown. Flashing goes through upgrade_tool, not the more familiar rkdeveloptool, because rkdeveloptool has never had UFS support.

Three workstreams had to converge. Jonas Karlman’s kwiboo/rk3576 branch carried the upstream RK3576 U-Boot enablement (pinctrl, clocks, storage controller bindings) and has been merging into mainline through 2026. The rockchip-linux/rkbin tree had to ship a UFS-capable MiniLoader, which the RK3576MINIALL.ini recipe assembles from the DDR init, the UFS-aware loader, and the OP-TEE/ATF blobs. The Armbian side was the integration: a board config on U-Boot v2026.04, a U-Boot DT overlay that brings the UFS regulators and PHY up at the right moment, and a flashing path that upgrade_tool accepts. None of these was individually hard. Making them line up is what took the time.

On the device, the stack does its job cleanly. The BootROM reads the IDB off UFS and pulls in the TPL; the TPL initialises DDR and the UFS host controller, sequences the regulators, negotiates the link, and reads the next stage; ATF jumps to U-Boot; U-Boot enumerates ufs 0 and loads the kernel; the kernel re-probes the same controller it just booted through. The work, almost all of it, lived at the TPL stage. Controller fine, PHY fine, but the device sits silent if regulator sequencing is wrong by a handful of milliseconds. Once that is right, the upper layers see a clean SCSI-shaped block device and the rest is unsurprising.

For users with a UFS-equipped M5, the next release image flashes through the FriendlyElec Rockchip workflow with upgrade_tool, BOOT switch on UFS/SD, storage dropdown labelled "SATA". The board boots without an SD card or eMMC involvement, and armbian-install writes the same image to UFS in place once the system is running from another medium. Against microSD on the same hardware the difference is felt rather than benchmarked: small reads land faster, the system stays responsive under concurrent I/O, and the write endurance is in a different ballpark.

A few rough edges remain. The vendor tooling will keep calling UFS "SATA" until either Rockchip relabels the GUI or rkdeveloptool grows a cs ufs opcode, neither on the immediate horizon. The BOOT switch is a hardware gate with no software override. And upgrade_tool ships only as a Linux x86_64 ELF, so flashing from an Apple Silicon Mac means a Linux VM with USB passthrough or a Windows host running the GUI.

The same plumbing now unlocks every other UFS-equipped RK3576 board in the catalogue. The M5 reached the line first because the hardware was available and the upstream pieces were the most complete. The others should be substantially less work, now that the integration pattern exists and the loader path has been proven on real silicon.

27 May, 2026 03:14PM by Daniele Briguglio

This week's work centers on board support expansion, kernel and U-Boot maintenance, and desktop and CI tooling refinements.

On the platform side, the Radxa Cubie A5E received Wi-Fi enablement and a kernel refresh as part of a broader update, while the youyeetoo YY3588 was promoted from CSC to standard support and the YY3568 gained PCIe NVMe functionality. The NanoPi R76S and Rock 5 ITX were both migrated to mainline U-Boot v2026.04, dropping vendor-branch gates, and the Vanxoak HD-RK3506-EVB was added with vendor and board imagery.

Kernel hygiene dominated the maintenance work: duplicate OPP labels on the Xiaoxin Pad Pro (sm8250) were corrected, broken UHS-I, xo-clock, SD, and DSI patches were removed from sm8550 trees for both 6.18 and 7.0, and a now-upstream r-spi backport was dropped from sunxi-6.18. The odroidxu4-current branch advanced to 6.6.141 across two successive bumps.

Desktop and infrastructure tooling saw layered improvements through configng: alsa-ucm-conf and libcamera/v4l userspace were added to the minimal tier, PackageKit and AppStream landed at the mid tier, and DE postinst scripts now execute in the build chroot to resolve missing wallpaper. UEFI x86 and arm64 desktop spins were switched to GNOME on the edge kernel, and build infrastructure gained inline ShellCheck PR feedback, scoped token permissions, fork-aware artifact gating, and event-driven runner cleanup via systemd hooks.

#Armbian #EmbeddedLinux #Rockchip #UBoot #KernelDevelopment

26 May, 2026 09:51PM by Michael Robinson

In application delivery environments, not every meaningful improvement needs to be disruptive.

A large part of maintaining stable and efficient infrastructures comes from continuously refining how systems behave in production: reducing operational friction, improving compatibility, and making administration more predictable for technical teams managing critical services.

That is the direction behind SKUDONET Enterprise Edition 10.2.0, a release focused on operational consistency, HTTPS flexibility, and incremental improvements across the ADC and WAF stack.

SKUDONET EE 10.2.0 introduces additional flexibility in HTTPS farm listener management, allowing HTTP/2 behaviour to be configured directly from the farm settings.

This enhancement simplifies the activation and management of modern HTTP delivery capabilities within HTTPS services, streamlining configuration across different application environments.

Designed for modern web applications and APIs, HTTP/2 improves connection efficiency through features such as request multiplexing and optimised resource delivery, helping reduce latency and improve responsiveness under concurrent traffic loads.

The new configuration approach allows administrators to manage listener behaviour more dynamically while maintaining the operational simplicity of HTTPS farms.

This release also includes several refinements in WebGUI sections that rely on picklist-based components.

While these changes may appear subtle, they have a direct impact on daily administration tasks, especially in environments with larger configurations or multiple managed objects.

The improvements focus on:

The goal is simple: to make administration more fluid without adding unnecessary complexity.

For technical teams working across multiple farms, services, or security policies, these kinds of usability refinements can significantly improve operational efficiency over time.

Session persistence management has also been improved in HTTP and HTTPS farms.

When a cookie domain is not explicitly configured, and a dynamic feature is enabled, SKUDONET can now automatically use the incoming request virtual host as the cookie domain.

This behaviour helps simplify deployments involving multiple domains or virtual hosts while reducing the need for additional manual configuration.

In practice, this makes multi-site and multi-tenant environments easier to manage and helps maintain more predictable persistence behaviour across distributed applications.
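The fallback described above can be sketched in a few lines of Python. This is not SKUDONET code: the function name `resolve_cookie_domain` and its parameters are hypothetical, and the sketch assumes that "incoming request virtual host" means the value of the Host header with any port removed.

```python
from typing import Optional


def resolve_cookie_domain(configured_domain: Optional[str],
                          dynamic_enabled: bool,
                          host_header: str) -> Optional[str]:
    """Pick the domain for a session-persistence cookie (illustrative only)."""
    if configured_domain:
        # An explicitly configured cookie domain always wins.
        return configured_domain
    if dynamic_enabled:
        # Fall back to the request's virtual host, lower-cased and without
        # the port. IPv6 literals are not handled in this sketch.
        return host_header.strip().lower().split(":", 1)[0]
    # No domain attribute: the browser treats it as a host-only cookie.
    return None


if __name__ == "__main__":
    print(resolve_cookie_domain(None, True, "Shop.Example.com:8443"))
```

With this shape, one farm serving several virtual hosts needs no per-domain cookie setting, which is the simplification the release describes.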

SKUDONET EE 10.2.0 also refines the behaviour associated with WAF rule movement actions.

The rule move process now correctly respects the selected administrative action, improving consistency when reorganising or managing security rules within the configuration.

For teams working with customised security policies, predictable rule management becomes especially important in order to maintain visibility and operational control across complex environments.

As part of its application security architecture, SKUDONET integrates WAF and IPDS capabilities directly into the ADC layer to help protect applications and APIs from modern web threats.

Another important refinement in this release affects URL handling within HTTP farms.

Previously, incoming request URLs could be automatically decoded before being forwarded to backend services. With 10.2.0, SKUDONET now preserves and forwards the original encoded URL exactly as received from the client.

Although technically small, this adjustment improves compatibility with applications and APIs that depend on encoded paths, special URL characters, or framework-specific routing behaviours.

In modern microservice architectures and API-driven environments, preserving request integrity can help avoid unexpected behaviours and simplify backend integrations.
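A small Python example shows why early decoding can matter to a backend. The path below is invented for illustration, and the segment-splitting helper is a stand-in for whatever routing a real framework performs.

```python
from urllib.parse import unquote

# Hypothetical request path: "%2F" is an encoded slash inside one segment,
# "%20" an encoded space.
original = "/files/reports%2F2026/summary%20v1.pdf"


def split_segments(path):
    """Split a path into non-empty segments, as a simple router might."""
    return [segment for segment in path.split("/") if segment]


# Forwarded as received: the backend sees three segments and decodes each itself.
preserved = split_segments(original)

# Decoded before forwarding: the encoded slash becomes a real separator.
decoded = split_segments(unquote(original))

if __name__ == "__main__":
    print(preserved)
    print(decoded)
```

Once the proxy has decoded the path, the backend cannot tell that `reports/2026` was originally a single segment, so routes that rely on encoded characters may resolve differently.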

SKUDONET EE 10.2.0 does not aim to reinvent the platform, but rather to continue refining how it behaves in real production environments.

From enhanced HTTPS flexibility to improvements in administration workflows, persistence handling, and backend compatibility, this release follows the same practical and progressive approach that defines the platform: reducing operational complexity without sacrificing control, performance, or security capabilities.

These are the kinds of improvements designed for teams that need predictable, stable, and easy-to-manage infrastructures in their day-to-day operations.

As part of its unified Application Delivery and Security approach, SKUDONET continues evolving as an ADC solution built for physical, virtual, cloud, and hybrid environments.

If you’re looking to improve the stability and security of your infrastructure without adding complexity, discover how SKUDONET adapts to physical, virtual, and cloud environments with a unified approach to Application Delivery—try it free for 30 days.

If you work with SKUDONET Enterprise Edition or want to stay up to date with the latest technical updates, visit our Timeline.

25 May, 2026 10:19AM by Isabel Perez

The latest Nubus for Kubernetes release improves observability: a new API endpoint provides metrics for operator dashboards, and additional information in the Management UI gives operators and administrators easy access to information that helps prevent or analyze incidents.

The REST API of the Univention Directory Manager (UDM) now includes a new endpoint that provides metrics about the Nubus deployment. The API has been designed to work best with Prometheus, the most commonly used implementation for collecting and storing metrics and providing them to dashboard solutions such as Grafana.

In the initial release, the metrics endpoint of the UDM REST API provides the following metrics:

Operators can easily identify when user growth reaches critical levels, exceeds the license limits, or when the installed Nubus version is outdated. Thanks to the domain information, it is also easy to distinguish between multiple Nubus deployments in larger environments. Detailed information can be found in the metrics chapter of the Nubus Manual.
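To give an idea of how such metrics are consumed, here is a minimal Python sketch that parses Prometheus text exposition output and derives a license usage ratio. The metric names `example_users_total` and `example_license_users_limit`, the label, and the sample values are placeholders invented for this example; the actual names are listed in the metrics chapter of the Nubus Manual. The parser is deliberately simplistic: it ignores label contents and optional timestamps.

```python
import re

# Hypothetical scrape result in Prometheus text exposition format.
SAMPLE = """\
# HELP example_users_total Number of user objects (hypothetical metric name)
# TYPE example_users_total gauge
example_users_total{domain="example.org"} 950
# TYPE example_license_users_limit gauge
example_license_users_limit{domain="example.org"} 1000
"""

# metric name, optional {labels}, then the sample value
LINE_RE = re.compile(r'^([a-zA-Z_:][a-zA-Z0-9_:]*)(?:\{(.*)\})?\s+(\S+)$')


def parse_metrics(text):
    """Return {metric_name: value}; later samples overwrite earlier ones."""
    samples = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and HELP/TYPE comments
        match = LINE_RE.match(line)
        if match:
            name, _labels, value = match.groups()
            samples[name] = float(value)
    return samples


def license_usage(samples):
    """Fraction of the licensed user count currently in use."""
    return samples["example_users_total"] / samples["example_license_users_limit"]


if __name__ == "__main__":
    print(license_usage(parse_metrics(SAMPLE)))
```

In a real setup Prometheus scrapes the endpoint itself and an alert rule or Grafana panel does this arithmetic; the sketch only shows the shape of the data.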

When analyzing configuration issues or end-user incidents, it is often necessary to access technical information used in the backends, such as the Univention Object Identifier introduced with Nubus for Kubernetes 1.10. To simplify the process of matching real names with technical identifiers for users, groups, and any other information stored in the directory service, Univention Nubus now includes these identifiers in the Web UI.

This is helpful in several scenarios: if a warning or error containing a technical identifier such as the Univention Object Identifier is logged in a backend service, administrators can now search for that identifier directly in the Web UI of Univention Nubus and easily access the full information about the affected user. If a user reports an issue to the end-user helpdesk, administrators can now easily retrieve the technical identifiers from the Nubus Web UI and use them to search log messages.

In addition, further information such as the LDAP DN, as well as timestamps and actors for object creation and last modification, are available, together with OpenLDAP internal information such as the entryUUID. This information is available for every object stored in the directory service and can be accessed both in the Web UI and in the UDM REST API. As this information is not needed for day-to-day administration, the UI elements are located in the “Advanced settings” section within a new “Technical Information” area.

As with every release, there are many smaller changes. One noteworthy aspect is the increasing number of security-related fixes we deliver for upstream components included in Univention Nubus. We assume that AI-based analysis of open source software also impacts the software included in Nubus, and we aim to release patched versions as quickly as possible — for example, for critical findings in Keycloak that were already addressed in the Nubus 1.19.1 patch-level release. Thanks to the infrastructure we introduced to prevent supply chain attacks, we can identify and fix these issues quickly.

A cost-saving improvement for operators of larger deployments is the newly introduced configuration option for the two data volumes used by the LDAP container images. One of these volumes stores the LDAP database with all stored objects and therefore requires larger and faster storage, while the other stores only runtime data and requires only a small volume with average performance. In previous releases, it was not possible to configure different storage classes and sizes for these volumes, resulting in runtime data being placed on large, fast, and therefore expensive storage. Thanks to the new configuration options, it is now possible to choose different storage classes and reduce costs.

As always, the Release Notes provide all details, and the installation process is described in the Nubus Operations Manual.

The post Nubus for Kubernetes 1.20: Monitoring and Observability first appeared on Univention.

22 May, 2026 11:07AM by Ingo Steuwer

22 May, 2026 02:27AM by xiaofei

For years, Android marketed itself as the antidote to Apple’s walled garden: open, flexible, and developer-friendly. That promise is now eroding, and fast.

The post Google’s Lock Down Policy appeared first on Purism.

21 May, 2026 10:46PM by Purism

The recent National Bureau of Economic Research (NBER) study on the effectiveness of school phone bans has reignited debate over whether restricting smartphones in schools actually helps students. Its headline result, that strict bans show “close to zero” immediate impact on test scores, has been interpreted by some as evidence that regulation doesn’t work.

The post Smartphone Study appeared first on Purism.

21 May, 2026 10:40PM by Purism

The Inspector General’s audits uncovered a systemic collapse in mobile‑device security across DHS’s Intelligence & Analysis (I&A) office and CIO organization.

The post DHS Inspector General Study appeared first on Purism.

21 May, 2026 08:36PM by Purism

California’s data broker crackdown, AI creeping into browsers, and global surveillance trends signal one truth: individual privacy is under attack. Here’s how Purism is building a future where your data stays yours.

The post Privacy Under Siege: Why Purism’s User Sovereignty Model is the Way Forward appeared first on Purism.

21 May, 2026 08:25PM by Purism

21 May, 2026 01:04PM by t.lamprecht (invalid@example.com)

21 May, 2026 12:17PM by Elmar Geese

In early 2025, internet users across Spain began experiencing something unexpected: legitimate websites going dark, developer tools becoming unreachable, and businesses losing access to their own online services. GitHub, GitLab, Docker registries, corporate websites, and e-commerce platforms were all affected, not by a cyberattack or a provider outage, but by a court-ordered IP block targeting illegal football streaming.

The mechanism was straightforward. A ruling from the Commercial Court No. 6 of Barcelona, issued in December 2024, authorised Spanish ISPs to block IP addresses identified as sources of illegal IPTV broadcasts during football matches.

The problem was that many of those addresses were Cloudflare IPs shared simultaneously by thousands of unrelated websites and services. When ISPs blocked the flagged addresses, they didn’t take down one streaming site. They took down everything behind the same shared IP.

The football piracy angle captured headlines. The infrastructure lesson is what matters for organisations operating critical services anywhere in the world.

To understand why IP-level blocking caused collateral damage at this scale, it helps to understand how large CDN and edge-delivery infrastructures work.

Cloudflare, like most major content delivery networks, operates a shared global edge infrastructure. When a company routes its traffic through Cloudflare, it passes through Cloudflare’s distributed network of nodes rather than reaching the origin server directly. From the outside, that service’s visible IP address is a Cloudflare IP pooled across many other customers simultaneously. A single IP address in a shared CDN infrastructure can front hundreds or even thousands of completely unrelated domains.

This is the multi-tenant model applied at the network edge: multiple organisations share the same underlying infrastructure resources, including IP address ranges, reverse proxy layers, TLS termination systems, and WAF infrastructure. Clients are logically separated; the network layer beneath them is not.

The consequence follows directly. Any action operating at the IP level (a court-ordered block, a blacklist entry, an ISP-level firewall rule) doesn’t target a single service. It targets every service resolving through that address. Developers found they couldn’t pull packages. Companies found their websites unreachable. None of them had any connection to the reason for the block.
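A toy model makes the collateral effect concrete. The domain names below are invented, the addresses come from the 192.0.2.0/24 documentation range, and the mapping is only a stand-in for how a shared edge might assign tenants to addresses.

```python
from collections import defaultdict

# Hypothetical tenants of a shared edge; IPs are documentation addresses.
EDGE_MAP = {
    "stream-pirate.example": "192.0.2.10",
    "shop.example": "192.0.2.10",
    "git.example": "192.0.2.10",
    "blog.example": "192.0.2.20",
}


def tenants_by_ip(edge_map):
    """Group domains by the edge IP that fronts them."""
    grouped = defaultdict(set)
    for domain, ip in edge_map.items():
        grouped[ip].add(domain)
    return grouped


def collateral(edge_map, target_domain):
    """Domains other than the target that go dark when the target's IP is blocked."""
    blocked_ip = edge_map[target_domain]
    return sorted(tenants_by_ip(edge_map)[blocked_ip] - {target_domain})


if __name__ == "__main__":
    print(collateral(EDGE_MAP, "stream-pirate.example"))
```

Blocking the address of the one flagged domain also takes down the shop and the Git host, while the blog on a different address is untouched. On a real CDN the set sharing one address can run into the thousands.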

It is important to note that this operational model is not unique to Cloudflare. Most large CDN, WAF, and edge delivery providers rely on shared, multi-tenant infrastructure to deliver scalability and cost efficiency globally. This architectural model is deeply embedded across much of the modern internet delivery stack.

The Spain case became a highly visible example of shared infrastructure risk in modern cloud delivery architectures.

The court order didn’t create this vulnerability. It revealed one that was already there.

Multi-tenant architecture is not inherently flawed. It became the dominant model in cloud computing for legitimate, well-understood reasons.

For providers, it enables resource pooling, centralised maintenance, elastic scalability, and cost efficiency at scale. For customers, it translates into lower entry costs, faster deployment, and freedom from managing infrastructure directly. Most organisations don’t need (or want) to operate their own edge networks, WAF layers, or global traffic distribution systems. Consuming those capabilities as a shared service makes practical and economic sense.

The issue isn’t that multi-tenancy is inefficient. The issue is that shared infrastructure creates shared operational exposure, and that exposure is rarely top of mind when organisations choose SaaS platforms or CDN services.

In a multi-tenant environment, organisations can inherit operational risk from events that have nothing to do with their own services:

Under normal conditions, these risks remain invisible. Most of the time, shared infrastructure works exactly as advertised. But under stress (a security incident, a large-scale abuse event, a court order), the shared layer is precisely where failures propagate.

This is what infrastructure engineers refer to as a shared blast radius: the operational scope of an incident isn’t defined by the intended target, but by the boundaries of the shared environment.

Single-tenant architecture reverses the model. Each client operates a dedicated environment: isolated IP addresses, independent compute resources, and exclusive security policies that are not shared with other organisations.

The tradeoff has traditionally been cost and operational overhead. Dedicated infrastructure is more resource-intensive than shared SaaS deployments. That gap is why multi-tenancy became the default.

But the relevant question is not which model is architecturally superior. It is which operational risks the organisation is prepared to carry.

For many workloads, multi-tenant SaaS remains the correct and efficient decision. For critical applications (platforms where availability directly affects revenue, SLA compliance, customer trust, or operational continuity) the calculation looks different.

Consider the practical implications. An e-commerce platform that becomes unreachable during peak sales hours due to a shared IP block loses revenue that it cannot recover. A SaaS provider whose service goes dark due to an incident involving a neighbouring tenant faces SLA breach conversations with its own customers. A financial service or healthcare platform that loses availability (even briefly, even for reasons entirely outside its control) faces reputational and regulatory consequences that extend well beyond the technical incident itself.

These aren’t theoretical edge cases. They represent the downstream business impact of shared operational exposure.

This is why infrastructure isolation is increasingly appearing in resilience discussions rather than just security or compliance contexts. The growing interest in dedicated environments, private edge infrastructure, and hybrid deployment models reflects a broader recognition: that shared platforms also mean shared operational exposure, and that for critical services, reducing that exposure is a legitimate architectural strategy, not just a premium option.

Managed single-tenant deployments (where the provider handles infrastructure operations on dedicated resources) narrow the practical gap between SaaS convenience and isolated control, without requiring organisations to operate everything themselves.

The Spain controversy highlighted something important: many organisations don’t fully understand how shared their infrastructure actually is. Before deploying critical services on SaaS or CDN platforms, the following questions are worth examining carefully.

Are IP addresses shared with other tenants? If multiple customers share the same edge IPs, reputation issues, legal restrictions, or blocking measures targeting one tenant can affect others.

What is the actual isolation boundary? Logical separation and operational isolation are not equivalent. Understanding how traffic, policies, rate limits, and security rules are enforced or shared across tenants matters for availability planning.

What is the provider’s blast radius during incidents? Every platform experiences incidents. The relevant question is how broadly those incidents propagate across the customer base.

Can another tenant’s actions affect your availability? This includes abuse-triggered IP restrictions, DDoS spillover, shared WAF rate limiting, and legal or compliance measures applied at the infrastructure level without tenant-specific granularity.

Is an isolated or hybrid deployment available? For critical services, the ability to deploy on dedicated infrastructure (on-premise, private cloud, or dedicated cloud instances) reduces dependency on shared operational models and gives organisations direct control over their exposure.

How transparent is the provider about its architecture? Providers that clearly document whether they operate as multi-tenant SaaS, isolated instances, or dedicated environments enable informed infrastructure decisions. Opacity here is itself a risk signal.

The debate in Spain will evolve through legal appeals, technical adjustments, and regulatory review. But the infrastructure question that surfaced isn’t tied to football season.

Organisations have spent years consolidating digital operations onto shared, efficient, globally distributed platforms. That consolidation brought genuine benefits: lower costs, faster deployment, and reduced operational complexity. It also created dependencies on shared infrastructure that those organisations do not control — and cannot protect themselves from when something goes wrong on the shared layer.

The Spain controversy is not fundamentally a story about piracy enforcement or internet freedom. It is a visibility event for a problem that already exists across large parts of modern cloud infrastructure: organisations are increasingly dependent on shared operational layers they neither fully control nor fully understand.

For critical services, resilience is no longer only about redundancy or scalability. It is also about understanding where infrastructure boundaries actually exist and whether an event targeting someone else can reach you through a layer you assumed was yours.

SKUDONET has extensively explored the architectural and operational differences between multi-tenant and isolated application delivery environments, particularly for organisations running critical services, APIs, or high-availability infrastructure.

21 May, 2026 09:35AM by Isabel Perez

Update Tor Browser to 15.0.14.

Remove Thunderbird.

You can still install Thunderbird as additional software.

If you have both the Thunderbird Email Client and Additional Software features of the Persistent Storage turned on, Tails automatically adds Thunderbird to your list of additional software.

A new version of Thunderbird is released in Debian shortly after each Tails release, because both Tails and Thunderbird follow the release calendar of Firefox. As a consequence, until Tails 7.5 (February 2026), the version of Thunderbird in Tails was almost always outdated, with known security vulnerabilities.

By installing Thunderbird as additional software, the latest version of Thunderbird is installed automatically from your Persistent Storage each time you start Tails.

Fix multiple security vulnerabilities in the Linux kernel and haveged that could allow an application in Tails to gain administration privileges.

For example, if an attacker was able to exploit other unknown security vulnerabilities in an application included in Tails, they might then use one of these vulnerabilities to take full control of your Tails and deanonymize you.

For more details, read our changelog.

Automatic upgrades are available from Tails 7.0 or later to 7.8.

If you cannot do an automatic upgrade or if Tails fails to start after an automatic upgrade, please try to do a manual upgrade.

Follow our installation instructions:

The Persistent Storage on the USB stick will be lost if you install instead of upgrading.

If you don't need installation or upgrade instructions, you can download Tails 7.8 directly:

The finish line! The moment we have anticipated is finally here – PureOS Crimson is released! All devices running PureOS Byzantium will receive the PureOS Upgrade application with their regular software updates. If you’d like to install Crimson fresh, refer to our installation instructions for PCs, servers, and the Librem 5. This has been an […]

The post PureOS Crimson Development Report: April 2026 – PureOS Crimson Released appeared first on Purism.

20 May, 2026 06:48PM by Purism

I was getting “<XF86AudioPlay> is undefined” displayed in the status bar of Emacs every 2-3 seconds. I didn’t notice any misbehavior or problems anywhere else, and couldn’t find any related log entries either. It didn’t stop, and I didn’t want to reboot my system just to see whether that would fix the problem, but it was driving me nuts.

Now, as a starting point I adjusted my sway configuration, to react to the XF86AudioPlay key press event:

bindsym XF86AudioPlay exec playerctl play-pause

After reloading sway, my music player started to play for 2-3 seconds, stopped playing, started again, and so on. It wasn’t an Emacs bug: something really was sending the XF86AudioPlay key event every 2-3 seconds. It wasn’t my USB keyboard or a stuck key on it, as I verified by unplugging it. So which device was causing this?

libinput from libinput-tools to the rescue:

% sudo libinput debug-events

[...]

-event12 KEYBOARD_KEY +0.000s KEY_PLAYPAUSE (164) pressed

event12 KEYBOARD_KEY +0.000s KEY_PLAYPAUSE (164) released

event12 KEYBOARD_KEY +2.887s KEY_PLAYPAUSE (164) pressed

event12 KEYBOARD_KEY +2.887s KEY_PLAYPAUSE (164) released

event12 KEYBOARD_KEY +5.773s KEY_PLAYPAUSE (164) pressed

event12 KEYBOARD_KEY +5.774s KEY_PLAYPAUSE (164) released

[...]

The `event12` device was sending this event. So what’s behind it?

% sudo udevadm info /dev/input/event12

P: /devices/pci0000:00/0000:00:1f.3/skl_hda_dsp_generic/sound/card0/input17/event12

M: event12

R: 12

J: c13:76

U: input

D: c 13:76

N: input/event12

L: 0

S: input/by-path/pci-0000:00:1f.3-platform-skl_hda_dsp_generic-event

E: DEVPATH=/devices/pci0000:00/0000:00:1f.3/skl_hda_dsp_generic/sound/card0/input17/event12

E: DEVNAME=/dev/input/event12

E: MAJOR=13

E: MINOR=76

E: SUBSYSTEM=input

E: USEC_INITIALIZED=12468722

E: ID_INPUT=1

E: ID_INPUT_KEY=1

E: ID_INPUT_SWITCH=1

E: ID_PATH=pci-0000:00:1f.3-platform-skl_hda_dsp_generic

E: ID_PATH_TAG=pci-0000_00_1f_3-platform-skl_hda_dsp_generic

E: XKBMODEL=pc105

E: XKBLAYOUT=us

E: XKBOPTIONS=lv3:ralt_switch,compose:rctrl

E: BACKSPACE=guess

E: LIBINPUT_DEVICE_GROUP=0/0/0:ALSA

E: DEVLINKS=/dev/input/by-path/pci-0000:00:1f.3-platform-skl_hda_dsp_generic-event

E: TAGS=:power-switch:

E: CURRENT_TAGS=:power-switch:

% sudo udevadm info -a /dev/input/event12 | grep -iE 'kernels|drivers|name'

KERNELS=="input17"

DRIVERS==""

ATTRS{name}=="sof-hda-dsp Headphone"

KERNELS=="card0"

DRIVERS==""

KERNELS=="skl_hda_dsp_generic"

DRIVERS=="skl_hda_dsp_generic"

KERNELS=="0000:00:1f.3"

DRIVERS=="sof-audio-pci-intel-tgl"

KERNELS=="pci0000:00"

DRIVERS==""

Behind this event12 is sof-hda-dsp Headphone, and evtest confirms that:

% sudo evtest

No device specified, trying to scan all of /dev/input/event*

Available devices:

/dev/input/event0: AT Translated Set 2 keyboard

/dev/input/event1: Sleep Button

/dev/input/event10: ThinkPad Extra Buttons

/dev/input/event11: sof-hda-dsp Mic

/dev/input/event12: sof-hda-dsp Headphone

/dev/input/event13: sof-hda-dsp HDMI/DP,pcm=3

/dev/input/event14: sof-hda-dsp HDMI/DP,pcm=4

/dev/input/event15: sof-hda-dsp HDMI/DP,pcm=5

/dev/input/event16: Yubico YubiKey OTP+FIDO+CCID

/dev/input/event17: Apple Inc. Magic Keyboard with Numeric Keypad

/dev/input/event18: Apple Inc. Magic Keyboard with Numeric Keypad

[...]

Select the device event number [0-24]: ^C

We can even get further information:

% sudo evtest /dev/input/event12

Input driver version is 1.0.1

Input device ID: bus 0x0 vendor 0x0 product 0x0 version 0x0

Input device name: "sof-hda-dsp Headphone"

Supported events:

Event type 0 (EV_SYN)

Event type 1 (EV_KEY)

Event code 114 (KEY_VOLUMEDOWN)

Event code 115 (KEY_VOLUMEUP)

Event code 164 (KEY_PLAYPAUSE)

Event code 582 (KEY_VOICECOMMAND)

Event type 5 (EV_SW)

Event code 2 (SW_HEADPHONE_INSERT) state 0

Properties:

Testing ... (interrupt to exit)

Event: time 1779295060.175766, type 5 (EV_SW), code 2 (SW_HEADPHONE_INSERT), value 1

Event: time 1779295060.175766, -------------- SYN_REPORT ------------

Event: time 1779295061.951168, type 1 (EV_KEY), code 164 (KEY_PLAYPAUSE), value 1

Event: time 1779295061.951168, -------------- SYN_REPORT ------------

Event: time 1779295061.951194, type 1 (EV_KEY), code 164 (KEY_PLAYPAUSE), value 0

Event: time 1779295061.951194, -------------- SYN_REPORT ------------

Event: time 1779295064.548671, type 1 (EV_KEY), code 164 (KEY_PLAYPAUSE), value 1

Event: time 1779295064.548671, -------------- SYN_REPORT ------------

Event: time 1779295064.548689, type 1 (EV_KEY), code 164 (KEY_PLAYPAUSE), value 0

Event: time 1779295064.548689, -------------- SYN_REPORT ------------

Event: time 1779295067.437172, type 1 (EV_KEY), code 164 (KEY_PLAYPAUSE), value 1

Event: time 1779295067.437172, -------------- SYN_REPORT ------------

Event: time 1779295067.437187, type 1 (EV_KEY), code 164 (KEY_PLAYPAUSE), value 0

Event: time 1779295067.437187, -------------- SYN_REPORT ------------

Event: time 1779295070.323775, type 1 (EV_KEY), code 164 (KEY_PLAYPAUSE), value 1

Event: time 1779295070.323775, -------------- SYN_REPORT ------------

Event: time 1779295070.323790, type 1 (EV_KEY), code 164 (KEY_PLAYPAUSE), value 0

Event: time 1779295070.323790, -------------- SYN_REPORT ------------

Event: time 1779295073.200350, type 1 (EV_KEY), code 164 (KEY_PLAYPAUSE), value 1

Event: time 1779295073.200350, -------------- SYN_REPORT ------------

Event: time 1779295073.200373, type 1 (EV_KEY), code 164 (KEY_PLAYPAUSE), value 0

Event: time 1779295073.200373, -------------- SYN_REPORT ------------

Event: time 1779295076.076228, type 1 (EV_KEY), code 164 (KEY_PLAYPAUSE), value 1

Event: time 1779295076.076228, -------------- SYN_REPORT ------------

Event: time 1779295076.076250, type 1 (EV_KEY), code 164 (KEY_PLAYPAUSE), value 0

Event: time 1779295076.076250, -------------- SYN_REPORT ------------

Event: time 1779295078.961740, type 1 (EV_KEY), code 164 (KEY_PLAYPAUSE), value 1

Event: time 1779295078.961740, -------------- SYN_REPORT ------------

Event: time 1779295078.961754, type 1 (EV_KEY), code 164 (KEY_PLAYPAUSE), value 0

Event: time 1779295078.961754, -------------- SYN_REPORT ------------

Event: time 1779295081.850156, type 1 (EV_KEY), code 164 (KEY_PLAYPAUSE), value 1

Event: time 1779295081.850156, -------------- SYN_REPORT ------------

Event: time 1779295081.850175, type 1 (EV_KEY), code 164 (KEY_PLAYPAUSE), value 0

Event: time 1779295081.850175, -------------- SYN_REPORT ------------

Event: time 1779295083.306612, type 5 (EV_SW), code 2 (SW_HEADPHONE_INSERT), value 0

Event: time 1779295083.306612, -------------- SYN_REPORT ------------

So when I plug in my headphone (see the `SW_HEADPHONE_INSERT` event), the unexpected behavior starts, and unplugging it stops the problem.

Good! But what was totally unexpected for me: my headphone, being a Beyerdynamic DT-990 Pro, does not have any keys. 8-)

As it turned out, the headphone jack was apparently not entirely clean. On the analog side, the audio codec seems to interpret the resulting fluctuating impedance as a headset's play button being pressed, again and again.
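To make the pattern in the log above easier to see, here is a minimal Python sketch that parses `evtest`-style lines and measures the gap between consecutive `KEY_PLAYPAUSE` presses. The regular expression only assumes the line layout shown in the excerpt; the names `parse_events`, `press_intervals`, and `SAMPLE` are made up for this illustration.

```python
import re

# Matches evtest lines such as:
#   Event: time 1779295064.548671, type 1 (EV_KEY), code 164 (KEY_PLAYPAUSE), value 1
# SYN_REPORT separator lines have no "type" field and are skipped.
EVENT_RE = re.compile(
    r"Event: time (?P<time>\d+\.\d+), type \d+ \((?P<type>\w+)\), "
    r"code \d+ \((?P<code>\w+)\), value (?P<value>-?\d+)"
)


def parse_events(log_text):
    """Return a list of (time, type, code, value) tuples found in log_text."""
    events = []
    for line in log_text.splitlines():
        match = EVENT_RE.search(line)
        if match:
            events.append(
                (float(match["time"]), match["type"], match["code"], int(match["value"]))
            )
    return events


def press_intervals(events, code="KEY_PLAYPAUSE"):
    """Seconds between consecutive key-down (value 1) events for the given code."""
    presses = [t for t, typ, c, v in events if typ == "EV_KEY" and c == code and v == 1]
    return [later - earlier for earlier, later in zip(presses, presses[1:])]


# A few lines copied from the log above (hypothetical variable name).
SAMPLE = """\
Event: time 1779295064.548671, type 1 (EV_KEY), code 164 (KEY_PLAYPAUSE), value 1
Event: time 1779295064.548671, -------------- SYN_REPORT ------------
Event: time 1779295064.548689, type 1 (EV_KEY), code 164 (KEY_PLAYPAUSE), value 0
Event: time 1779295067.437172, type 1 (EV_KEY), code 164 (KEY_PLAYPAUSE), value 1
Event: time 1779295070.323775, type 1 (EV_KEY), code 164 (KEY_PLAYPAUSE), value 1
Event: time 1779295083.306612, type 5 (EV_SW), code 2 (SW_HEADPHONE_INSERT), value 0
"""

if __name__ == "__main__":
    for gap in press_intervals(parse_events(SAMPLE)):
        print(f"{gap:.2f} s between presses")
```

In the excerpt, the phantom presses arrive roughly every 2.9 seconds, which is more regular than a human pressing a button and fits a hardware-level cause.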

I cleaned the jack of my headphone and my XF86AudioPlay problem is gone. Case closed.

20 May, 2026 02:28AM by xiaofei

This week's work advances on three fronts: kernel and bleedingedge alignment across Rockchip and Sunxi trees, board and platform enablement spanning RV1106 to SpacemiT, and CI hardening with self-hosted runner maintenance.

On the kernel side, bleedingedge was bumped to 7.1-rc3, accompanied by cfg80211 API fixes and re-enablement of the rtl8189fs and rtl8852bs drivers for the new release. Both the rockchip64 and sunxi patch stacks for current and edge were rewritten, an upstream ptrace fix for CVE-2026-46333 was backported to linux-rockchip, and the odroidxu4-current kernel moved to 6.6.139.

Platform enablement was broad. The Ayn Odin2 gained 7.0 kernel support, the Mekotronics R58X-Pro switched its vendor build to mainline U-Boot with a corrected LCD driver, and the H96 TV box advanced to U-Boot v2026.04. RV1106 transitioned from extlinux to a bootscript and gained DS1307, PCF85063, and RV8803 RTC drivers, while SpacemiT received OpenSBI, U-Boot, and BPI-F3 DTS fixups. Smaller but user-visible improvements include NanoPi M5 second USB3 port exposure via DRD0 host-mode pinning, NORCO EMB-3531 LPDDR4X variants, RK3528 USB2 PHY corrections for high-speed NCM, and UEFI x86 images enabling iwlwifi MLD and Intel SOF audio for MTL, LNL, and PTL.

Infrastructure work centered on self-hosted runner reliability and supply-chain hygiene. A new runner-cleanup module provides hourly disk and memory maintenance, skips busy runners, and ships via .deb, while a maintenance watchdog was added to the SDK repository. Multiple StepSecurity hardening passes landed across build and SDK workflows, though an overly strict egress-policy was subsequently reverted after breaking builds.

#Armbian #EmbeddedLinux #Rockchip #RISCV #KernelDevelopment

bleedingedge to 7.1-rc3. by @EvilOlaf in armbian/build#9806

lcd_vk2c21 LCD driver that never worked; backport working vinka,vk2c21 driver; fix Mekos DTs. by @rpardini in armbian/linux-rockchip#482

19 May, 2026 12:09PM by Michael Robinson

19 May, 2026 02:30AM by xiaofei

The Supreme Court’s consideration of geofence warrants represents one of the most technically and constitutionally significant privacy cases of the modern era. The core issue is whether bulk collection of location metadata—generated by consumer devices and cloud-based services—can coexist with the Fourth Amendment’s prohibition against unreasonable searches.

The post Geofence Warrants, Location Data, and the Fourth Amendment in the Digital Age appeared first on Purism.

18 May, 2026 03:04PM by Purism

18 May, 2026 10:34AM by Greenbone AG

18 May, 2026 09:03AM by Greenbone AG

Together with Bluella, we hosted a live technical webinar on a problem that many infrastructure and cybersecurity teams eventually face:

What actually happens when applications start failing under pressure?

Not in theory.

Not in a slide deck.

But in real environments, with real traffic, real attacks, and real operational stress.

From the beginning, the idea behind the session was simple: instead of talking about infrastructure problems abstractly, we wanted to show them happening live.

Throughout the webinar, we recreated several scenarios using the SKUDONET Application Delivery & Security Platform, demonstrating how modern infrastructures behave when traffic spikes, backend services become unstable, or malicious requests begin targeting exposed applications.

The session brought together professionals working across cloud, infrastructure, DevOps, and cybersecurity environments, and one of the most rewarding parts for us was the level of interaction during the event itself.

Many attendees stayed connected after the webinar officially ended to continue discussing deployment models, traffic visibility, failover strategies, and application security challenges they are currently facing in production environments.

We also want to thank the Bluella team for the collaboration throughout the entire process. From planning the session to coordinating the live demonstrations, it was genuinely a pleasure working together as partners.

Further below, you’ll also find a technical assessment designed to help teams evaluate how prepared their infrastructure really is under pressure.

Assess Your Infrastructure Readiness

One of the recurring ideas during the session was that modern infrastructure problems rarely look dramatic at the beginning.

Most incidents don’t start with systems suddenly collapsing.

Instead, they usually begin with small signs:

And in many cases, by the time teams fully understand what is happening, they are already reacting under pressure.

That’s why we wanted the webinar to focus less on theory and more on operational behaviour.

Rather than presenting isolated product features, the demonstrations focused on how load balancing, high availability, Web Application Firewall (WAF) protections, and traffic inspection work together during real infrastructure stress scenarios.

The first part of the webinar focused on Layer 4 and Layer 7 load balancing.

During the live demonstration, attendees could see how traffic was distributed dynamically across backend nodes while monitoring concurrency, health checks, and response behaviour in real time.
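As a rough illustration of distributing traffic only across backends that pass their health checks, here is a minimal Python sketch of round-robin selection that skips nodes marked down. It is a generic teaching example, not SKUDONET's implementation; the class name `RoundRobinBalancer` and the backend labels are hypothetical.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Round-robin selection that skips backends currently marked unhealthy."""

    def __init__(self, backends):
        self.backends = list(backends)
        self.healthy = set(self.backends)
        self._ring = cycle(self.backends)

    def mark_down(self, backend):
        # Called when a health check fails (the probing itself is out of scope here).
        self.healthy.discard(backend)

    def mark_up(self, backend):
        # Called when a previously failing backend passes its health check again.
        if backend in self.backends:
            self.healthy.add(backend)

    def pick(self):
        # Try each backend at most once per call, so an outage cannot loop forever.
        for _ in range(len(self.backends)):
            candidate = next(self._ring)
            if candidate in self.healthy:
                return candidate
        raise RuntimeError("no healthy backends available")


if __name__ == "__main__":
    lb = RoundRobinBalancer(["app-1", "app-2", "app-3"])
    lb.mark_down("app-2")
    print([lb.pick() for _ in range(4)])
```

A production balancer would also weigh concurrency and response times, which this sketch deliberately leaves out.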

One interesting discussion that emerged during this section was how operational complexity continues to be a challenge in many environments.

Even today, adjusting traffic distribution policies or deploying failover mechanisms often involves fragmented tooling, manual processes, or long intervention times.

The goal of the demonstration was not simply to show traffic balancing itself, but to illustrate how quickly infrastructure teams need to react when services begin degrading under pressure.

Another important moment during the webinar came during the high availability and failover demonstrations.

Instead of explaining failover conceptually, we simulated node failure conditions live while maintaining service continuity through an active-passive cluster configuration.
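The basic idea behind an active-passive pair can be sketched in a few lines of Python: the standby takes over once the active node misses a configurable number of consecutive health probes. This is a simplified model under stated assumptions, not a description of how the platform in the demo works; `ActivePassivePair`, `max_missed`, and the node names are hypothetical.

```python
class ActivePassivePair:
    """Toy model of active-passive failover driven by health probe results."""

    def __init__(self, primary, standby, max_missed=3):
        self.active = primary
        self.standby = standby
        self.max_missed = max_missed  # consecutive failed probes before failover
        self.missed = 0

    def heartbeat(self, ok):
        """Record one probe of the active node and return the node now serving."""
        if ok:
            self.missed = 0
        else:
            self.missed += 1
            if self.missed >= self.max_missed:
                # Promote the standby; the failed node becomes the new standby.
                self.active, self.standby = self.standby, self.active
                self.missed = 0
        return self.active


if __name__ == "__main__":
    pair = ActivePassivePair("node-a", "node-b", max_missed=2)
    for probe_ok in (True, False, False, True):
        print(probe_ok, "->", pair.heartbeat(probe_ok))
```

Requiring several missed probes trades a slightly slower takeover for fewer spurious failovers. Real clusters typically also move a shared address between nodes and guard against both nodes believing they are active.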

What became very clear during this part of the session is that availability is no longer just an infrastructure metric.

For many organizations, even small periods of service degradation directly affect:

Modern applications are now deeply connected to business operations, which means infrastructure resilience is no longer a secondary concern.

One of the most dynamic parts of the webinar focused on DDoS mitigation and traffic behaviour under stress.

Using live traffic simulations, attendees could observe the difference between legitimate user traffic and malicious flooding attempts designed to exhaust backend resources.

What made the demonstration especially interesting was not simply the attack mitigation itself, but the visibility aspect behind it.

Because one of the biggest challenges during modern attacks is not only stopping malicious traffic.

It’s understanding what is actually happening before systems become unstable.

Many attacks today are designed to degrade infrastructure progressively rather than immediately taking services offline. Performance deteriorates slowly, observability becomes harder, and teams lose operational clarity while trying to identify the root cause.

The session showed how traffic filtering and inspection at the application delivery layer can help isolate malicious behaviour before backend services are affected.
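One common building block for this kind of filtering is a per-client rate limit applied before requests reach the backend. The sketch below shows a sliding-window limiter in Python as an illustration of the general technique only; it is not taken from the webinar, and `SlidingWindowLimiter` and its parameters are hypothetical names.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most max_requests per client within any window_seconds span."""

    def __init__(self, max_requests, window_seconds):
        self.max_requests = max_requests
        self.window = window_seconds
        self._hits = defaultdict(deque)  # client id -> timestamps of accepted requests

    def allow(self, client, now):
        """Return True if the request at time `now` (in seconds) should be forwarded."""
        hits = self._hits[client]
        # Forget accepted requests that have fallen out of the window.
        while hits and now - hits[0] >= self.window:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False  # over the limit: drop or challenge instead of forwarding
        hits.append(now)
        return True


if __name__ == "__main__":
    limiter = SlidingWindowLimiter(max_requests=3, window_seconds=1.0)
    for t in (0.0, 0.1, 0.2, 0.3, 1.05):
        print(t, limiter.allow("203.0.113.7", t))
```

Passing `now` explicitly keeps the example deterministic; a real deployment would read a monotonic clock. A simple per-address limit also does little against a widely distributed flood, which is why it is usually combined with other inspection.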

The webinar also explored application-layer attacks such as Cross-Site Scripting (XSS) and SQL Injection.

Rather than discussing these threats abstractly, attendees could see how malicious payloads interact with exposed applications in real time and how WAF protections identify and block suspicious requests before they reach backend services.

One of the most interesting conversations during this section focused on how difficult modern attacks can be to distinguish from normal traffic patterns.

From the outside, many malicious requests initially appear legitimate.

But underneath, they may be attempting to:

This is where application visibility becomes critical.

Because modern application delivery is no longer only about distributing traffic efficiently.

It’s about understanding whether that traffic should be trusted in the first place.

Although the webinar covered load balancing, failover, DDoS mitigation, and WAF protections separately, one common theme appeared throughout the entire session: operational control.

Modern infrastructures generate enormous volumes of traffic, requests, logs, alerts, and behavioural changes.

Without visibility, teams often end up reacting blindly during incidents.

This is one of the reasons why modern Application Delivery Controllers (ADCs) like SKUDONET increasingly combine:

into a single operational layer.

The objective is not simply performance.

It’s maintaining control when infrastructure conditions become unpredictable.

For us, one of the most valuable parts of the webinar was what happened after the official presentation ended.

Several attendees stayed connected to continue discussing infrastructure architectures, deployment flexibility, hybrid environments, operational bottlenecks, and the practical challenges of maintaining application availability under increasing traffic and security pressure.

Those conversations reinforced something we see constantly across the industry:

Teams are no longer looking only for “more features”.

They are looking for:

Emilio Campos, CEO of SKUDONET, showcasing a live demo during the webinar

The scenarios explored during the webinar reflect operational challenges that many organizations already face today, from traffic spikes and Layer 7 attacks to limited visibility during incidents and increasing pressure on critical applications.

To help teams evaluate their own environments, we’ve prepared a short technical assessment inspired by the same types of real-world scenarios covered during the session:

15 May, 2026 10:25AM by Isabel Perez

15 May, 2026 03:27AM by xiaofei

14 May, 2026 05:03AM by Joseph Lee

The VyOS documentation site has carried us a long way. Sphinx + reStructuredText served the project well for years — but the world around our documentation has changed faster than our toolchain. Contributors write in Markdown. LLM coding assistants pull docs into their context windows. Reviewers expect machine-assisted feedback. AI agents need a stable, machine-readable surface to reason about VyOS configuration.

14 May, 2026 12:30AM by Yuriy Andamasov (yuriy@sentrium.io)

We have published Qubes Security Bulletin (QSB) 114: Intel CPU data exposure vulnerability. The text of this QSB and its accompanying cryptographic signatures are reproduced below, followed by a general explanation of this announcement and authentication instructions.

---===[ Qubes Security Bulletin 114 ]===---

2026-05-13

Intel CPU data exposure vulnerability

User action

------------

Continue to update normally [1] in order to receive the security updates

described in the "Patching" section below. No other user action is

required in response to this QSB.

Summary

--------

On 2026-05-12, Intel published "2026.2 IPU-Intel Processor Firmware

Advisory" (INTEL-SA-01420, CVE-2025-35979). [3] Unfortunately, this

advisory does not provide sufficient information for us to make a

definitive assessment about the extent to which this vulnerability

affects the security of Qubes OS. Based on the limited information

available, we surmise that it is likely that it might affect cross-qube

data exposure.

Impact

-------

On affected systems, an attacker who has managed to compromise one qube

can attempt to exploit this vulnerability in order to infer data

belonging to other qubes.

Affected systems

-----------------

Intel Core Ultra Series 2 and 3 processors are affected. For a more

detailed list of affected products, see Intel's "2026.2 IPU-Intel

Processor Firmware Advisory." [3]

Patching

---------

The following packages contain security updates that address the

vulnerabilities described in this bulletin:

For Qubes 4.2 and 4.3, in dom0:

- microcode_ctl version 2.1.20260512